We built systems that can read, write, extract, summarize, automate — basically everything except decide what happens next. So the workflow looks fast, even impressive… until it hits that invisible wall where someone has to step in and think.

And that’s where the illusion breaks.

Because no matter how many tools you stack — copilots, automations, dashboards — the outcome still depends on humans stitching everything together. The system does the steps. The team carries the responsibility.

That’s exactly the gap agentic AI is trying to close. You give it a goal, and it figures out how to move forward across tools, across data, across edge cases until the job is actually done.

This is why it suddenly feels like a must-have, not a nice-to-have.

TL;DR

- What it is: Agentic AI for business means AI systems that plan, reason, and execute multi-step workflows autonomously — far beyond rule-based automation.

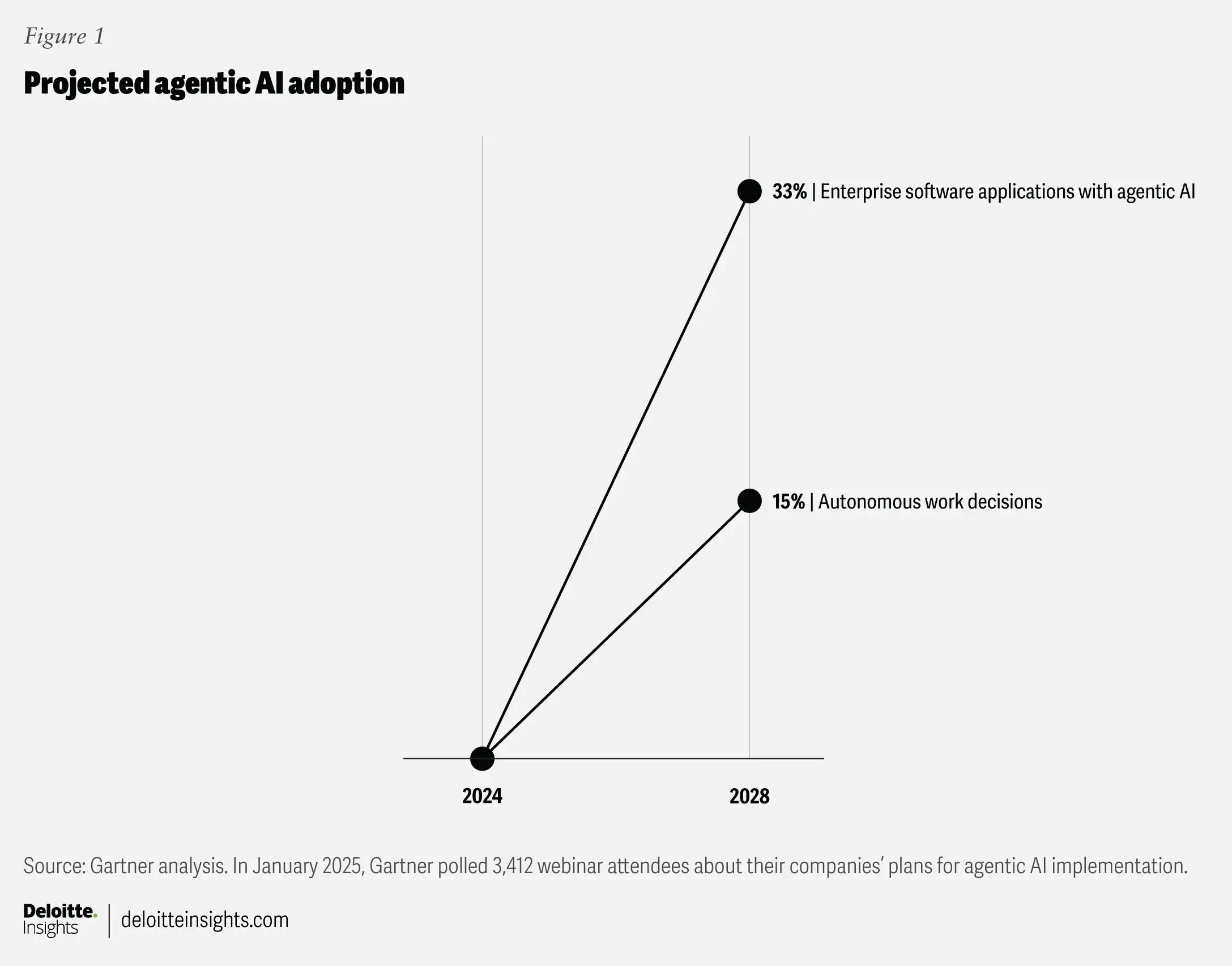

- Why it matters: By 2028, 15% of day-to-day work decisions are projected to be made autonomously through agentic AI, up from none in 2024, and 33% of enterprise software applications are projected to include agentic AI in the same timeframe.

- Key challenge: 79% of organizations are experimenting; only 11% run agentic AI in production. That gap is an architecture problem, not a technology problem.

- Key solution: Production-grade agentic AI requires orchestration design, legacy system integration, governance as infrastructure, and an org-level design shift.

- Expected impact: Enterprises running agentic AI at scale average 171% ROI — roughly 3x traditional automation.

What Is Agentic AI for Business?

Here is the short version: agentic AI for business is the difference between a tool that executes instructions and a system that completes goals. And that distinction changes everything about how you design, deploy, and govern it.

A traditional automation tool follows a script. Same steps, same order, every time. When something unexpected happens, it stops and waits for a human.

An agentic AI system reasons. It receives a goal — not a script — and decides how to pursue it. It uses tools, queries data, delegates subtasks, recovers from errors, and returns a completed result. The system handles the complexity, not your team.

In practice, that means systems that can route and resolve support tickets without a human in the loop, extract and validate documents across multiple form types, monitor infrastructure and trigger incident response autonomously, or analyze procurement data and execute decisions within defined parameters. Most of these take more than a single agent: multi-agent architectures do the work under the hood.

The shift is from automation that executes tasks to agents that complete goals. That distinction is what makes agentic AI genuinely different — and genuinely harder to deploy correctly.

How Agentic AI Workflows in Business Actually Work

Before you can deploy agentic AI effectively, your team needs to understand what is actually happening inside the system. Not at the model level — at the workflow level.

Every production-grade agentic workflow runs on four operational layers:

Layer 1 — Perception (What the agent reads)

The agent receives input from your business environment: a support ticket, a document upload, an alert from your monitoring system, a data change in your CRM. The quality of this input determines everything downstream. Garbage in, cascading failure out.

This is why data quality and system integration are prerequisites, not afterthoughts. Agents that cannot reliably read your environment cannot reliably act in it.

Layer 2 — Planning (What the agent decides)

Here is where agentic AI diverges from traditional automation. Rather than executing a fixed script, the agent constructs a plan. It draws on what Andrew Ng, founder of DeepLearning.AI, describes as four agentic design patterns: Reflection (evaluating its own outputs), Tool Use (calling APIs, databases, external systems), Planning (sequencing multi-step actions toward a goal), and Multi-Agent Collaboration (delegating subtasks to specialized agents).

In a document processing workflow, planning looks like this: the agent receives a tax form, identifies the form type, retrieves the relevant extraction schema, queries the client record, decides whether confidence thresholds are met, and routes the output accordingly — all before any human sees it.
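The planning sequence above can be sketched in a few lines of Python. This is a minimal illustration, not a production planner: the threshold value, the schema field names, and the `plan_document` function are all hypothetical, and the form type and confidence score are assumed to come from an upstream classifier.

```python
from dataclasses import dataclass

# Hypothetical threshold and schema field names, chosen only for illustration.
CONFIDENCE_THRESHOLD = 0.90
EXTRACTION_SCHEMAS = {
    "W-2": ["employer_ein", "wages", "federal_tax_withheld"],
    "1099-INT": ["payer_tin", "interest_income"],
}


@dataclass
class PlanResult:
    form_type: str
    fields: list[str]
    route: str  # "auto_commit" or "human_review"


def plan_document(form_type: str, client_known: bool, confidence: float) -> PlanResult:
    """Sequence the planning steps for one document and pick a route."""
    # Look up the extraction schema for the identified form type.
    fields = EXTRACTION_SCHEMAS.get(form_type)
    if fields is None:
        # Unknown form types are novel edge cases, so they go to a person.
        return PlanResult(form_type, [], "human_review")
    # Require a matching client record and a confidence score above threshold.
    if client_known and confidence >= CONFIDENCE_THRESHOLD:
        return PlanResult(form_type, fields, "auto_commit")
    return PlanResult(form_type, fields, "human_review")
```

Calling `plan_document("W-2", True, 0.97)` routes to `auto_commit`, while the same form at a confidence of 0.5 routes to `human_review`. The point of the sketch is that the routing decision is made explicitly and before any human sees the document.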

Layer 3 — Execution (What the agent does)

The agent acts. It calls tools, writes records, triggers downstream systems, sends notifications, or escalates to a human checkpoint — depending on what the plan requires.

This is the layer where integration architecture matters most. An agent that can plan perfectly but cannot reach your legacy CRM, your document management system, or your ERP is operationally useless. The execution layer is only as good as the integrations underneath it.

Layer 4 — Verification (What gets checked)

Every well-designed agentic workflow has a verification step — a point at which the agent (or a separate verification agent) validates its own output before the result is committed. Did the extraction match the expected schema? Did the decision fall within authorized parameters? Was the confidence score above threshold?

Verification is what makes governance practical rather than theoretical. It is the mechanism by which “human oversight” gets operationalized at scale — not by humans reviewing every action, but by the system flagging the actions that actually need review.
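The three verification questions can be expressed as one small check. The sketch below assumes the agent's output is a dictionary with `fields`, `amount`, and `confidence` keys; those key names, the `verify` function, and the default threshold are illustrative assumptions rather than any particular platform's API.

```python
from dataclasses import dataclass, field


@dataclass
class Verdict:
    approved: bool
    reasons: list[str] = field(default_factory=list)


def verify(output: dict, required_fields: set[str], max_amount: float,
           min_confidence: float = 0.9) -> Verdict:
    """Validate an agent output before it is committed."""
    reasons = []
    # Did the extraction match the expected schema?
    missing = sorted(required_fields - set(output.get("fields", {})))
    if missing:
        reasons.append(f"missing fields: {missing}")
    # Did the decision fall within authorized parameters?
    if output.get("amount", 0.0) > max_amount:
        reasons.append("amount exceeds authorized limit")
    # Was the confidence score above threshold?
    if output.get("confidence", 0.0) < min_confidence:
        reasons.append("confidence below threshold")
    return Verdict(approved=not reasons, reasons=reasons)
```

An output that fails any check comes back with `approved=False` and a list of reasons, which is exactly what a human reviewer needs to see in the exception queue.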

What Matters Operationally

Understanding the four layers is the foundation. What most teams underestimate is how much the operational reality of running agentic AI differs from running traditional software. It is a different game entirely, and most teams do not yet have the maturity to play it.

- Your team’s job changes. In a traditional automation environment, engineers build scripts and operators monitor dashboards. In an agentic environment, engineers design decision boundaries and operators manage exception queues. The volume of human decisions does not disappear — it concentrates. Your team handles fewer decisions, but each one is higher-stakes.

- You need a new definition of “working.” Traditional software either works or it doesn’t. Agentic systems exist on a confidence spectrum. An agent that resolves 87% of support tickets autonomously is not “broken” because it escalates 13%. It is working exactly as designed. Your operational metrics need to reflect this — measuring autonomous resolution rate, exception quality, and escalation accuracy, not just uptime.

- Human-in-the-loop is a design decision, not a default. The question is not whether humans are involved. It is where — at which confidence threshold, for which decision types, with which response time. A well-designed agentic system routes low-confidence outputs and novel edge cases to human review automatically. Everything else flows through. The ratio shifts over time as the system builds a track record.

- Failure modes are different. Traditional software fails loudly — an error, a crash, an obvious wrong output. Agentic systems can fail quietly — producing plausible but incorrect outputs, looping on edge cases, or compounding errors across a multi-step workflow. This is why auditability is not optional. Every agent action needs a log. Every decision needs a trace.
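To make the new definition of “working” concrete, here is one way the two headline metrics could be computed from logged outcomes. The definitions are assumptions made for this sketch: autonomous resolution rate is the share of tickets the agent closed without escalating, and escalation accuracy is the share of escalations a reviewer later judged to have genuinely needed a human. The `TicketOutcome` type is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TicketOutcome:
    escalated: bool     # True if the agent routed the ticket to a human
    needed_human: bool  # Reviewer's after-the-fact judgment (assumed label)


def autonomous_resolution_rate(outcomes: list[TicketOutcome]) -> float:
    """Share of tickets the agent resolved without escalating."""
    if not outcomes:
        raise ValueError("no outcomes to measure")
    return sum(not o.escalated for o in outcomes) / len(outcomes)


def escalation_accuracy(outcomes: list[TicketOutcome]) -> Optional[float]:
    """Share of escalations that really needed a human; None if none escalated."""
    escalated = [o for o in outcomes if o.escalated]
    if not escalated:
        return None
    return sum(o.needed_human for o in escalated) / len(escalated)
```

With 87 autonomous resolutions and 13 escalations, of which 10 truly needed a person, the resolution rate is 0.87 and the escalation accuracy is 10/13, or about 0.77. Tracking both matters: a high resolution rate with poor escalation accuracy suggests reviewers are spending time on tickets the agent could have closed.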

Key Insights on Agentic AI for Business

Insight 1: The Adoption-to-Production Gap Is the Real Crisis

The number that matters most is not market size or average ROI. It is the gap: 79% adoption, 11% production scale (Landbase). Seven in eight organizations experimenting with agentic AI have not made it to production.

Three failure patterns explain most of the gap:

- Pilot environments are clean. Production is not. Real workflows have edge cases, inconsistent data, and legacy dependencies that pilots never encounter. Agents that perform in demos break down in week one of live deployment.

- Agents expose broken process design. “AI can’t fix a broken business process.” Agents accelerate whatever they are attached to — including the broken parts.

- Handoff architecture is missing. The failure point is rarely the model. It is the transition between agent output and the next workflow step — where formats don’t match, approvals break down, and no one designed a human checkpoint that could handle agent volume.

Successful AI agent implementation starts with architectural thinking, not better prompts. See how we approach production-grade AI solutions at GIANTY.

Insight 2: Multi-Agent Orchestration Is Where Complexity Lives

Single-purpose agents are relatively straightforward. The complexity explodes when you connect them.

In naive multi-agent implementations, coordination overhead consumes 90%+ of system resources (MachineLearningMastery, Security Boulevard). Organizations moving from single to multi-agent architectures report a 3–5x increase in system complexity. Gartner projects that 40% of agentic AI projects will fail by 2027 specifically due to infrastructure inadequacy at this layer.

The solution is orchestration design:

- Clear task boundaries — each agent owns one decision scope, no overlap.

- Structured handoffs — agents pass typed outputs, not free-text, between steps.

- Explicit fallback paths — when an agent cannot complete a task, it routes to a defined checkpoint, not into a loop.

- Centralized state — a single source of truth for workflow status, visible to agents and human reviewers alike.
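A toy version of these four principles, assuming a two-agent document workflow, might look like the following. The agent functions, the `Handoff` type, and the 0.9 threshold are invented for illustration; a real orchestrator would persist the state in a shared store rather than in memory.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DONE = "done"
    NEEDS_REVIEW = "needs_review"


@dataclass(frozen=True)
class Handoff:
    """Typed payload passed between agents instead of free text."""
    task_id: str
    form_type: str
    confidence: float


@dataclass
class WorkflowState:
    """Single source of truth for workflow status."""
    status: dict[str, Status] = field(default_factory=dict)


def classify_agent(task_id: str, text: str) -> Handoff:
    # Clear task boundary: this agent owns exactly one decision, the form type.
    known = "W-2" in text
    return Handoff(task_id, "W-2" if known else "unknown", 0.95 if known else 0.2)


def extract_agent(handoff: Handoff, state: WorkflowState) -> None:
    # Explicit fallback: anything this agent cannot handle goes to a
    # defined checkpoint instead of being retried in a loop.
    if handoff.form_type == "unknown" or handoff.confidence < 0.9:
        state.status[handoff.task_id] = Status.NEEDS_REVIEW
        return
    state.status[handoff.task_id] = Status.DONE
```

Because the handoff is a typed object, a format mismatch between agents surfaces as an error at the boundary rather than as a silently misread string further downstream.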

This is the unsexy layer. It is also the layer that determines whether your system runs in production or gets quietly shut down after six months.

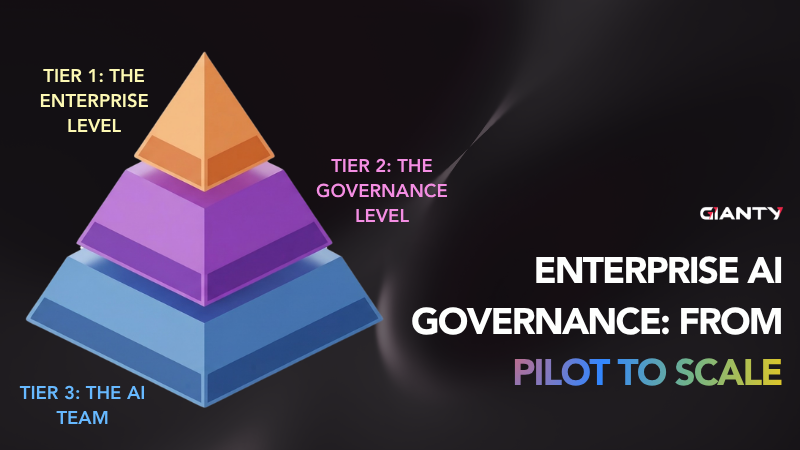

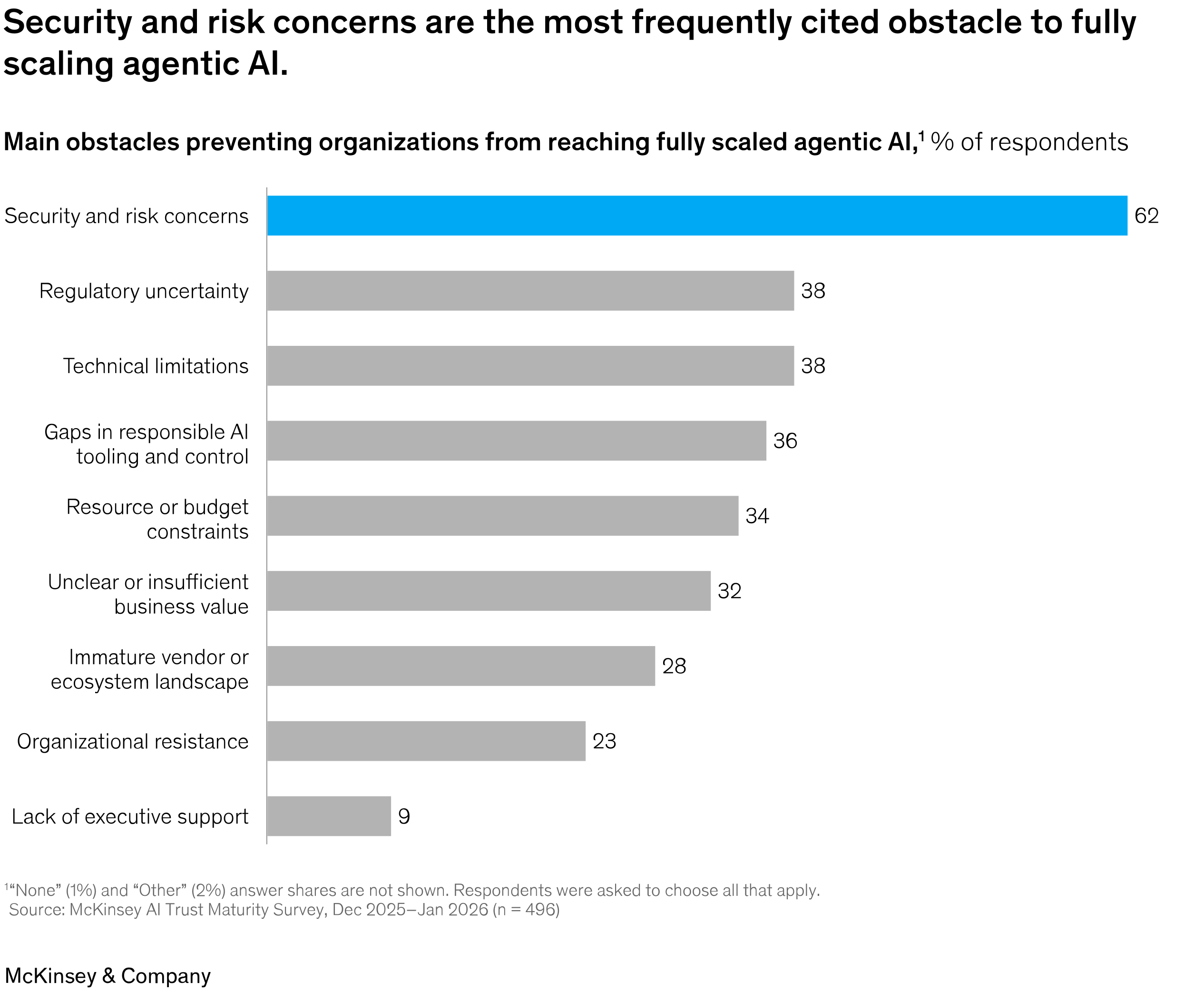

Insight 3: Governance Is Architecture, Not Afterthought

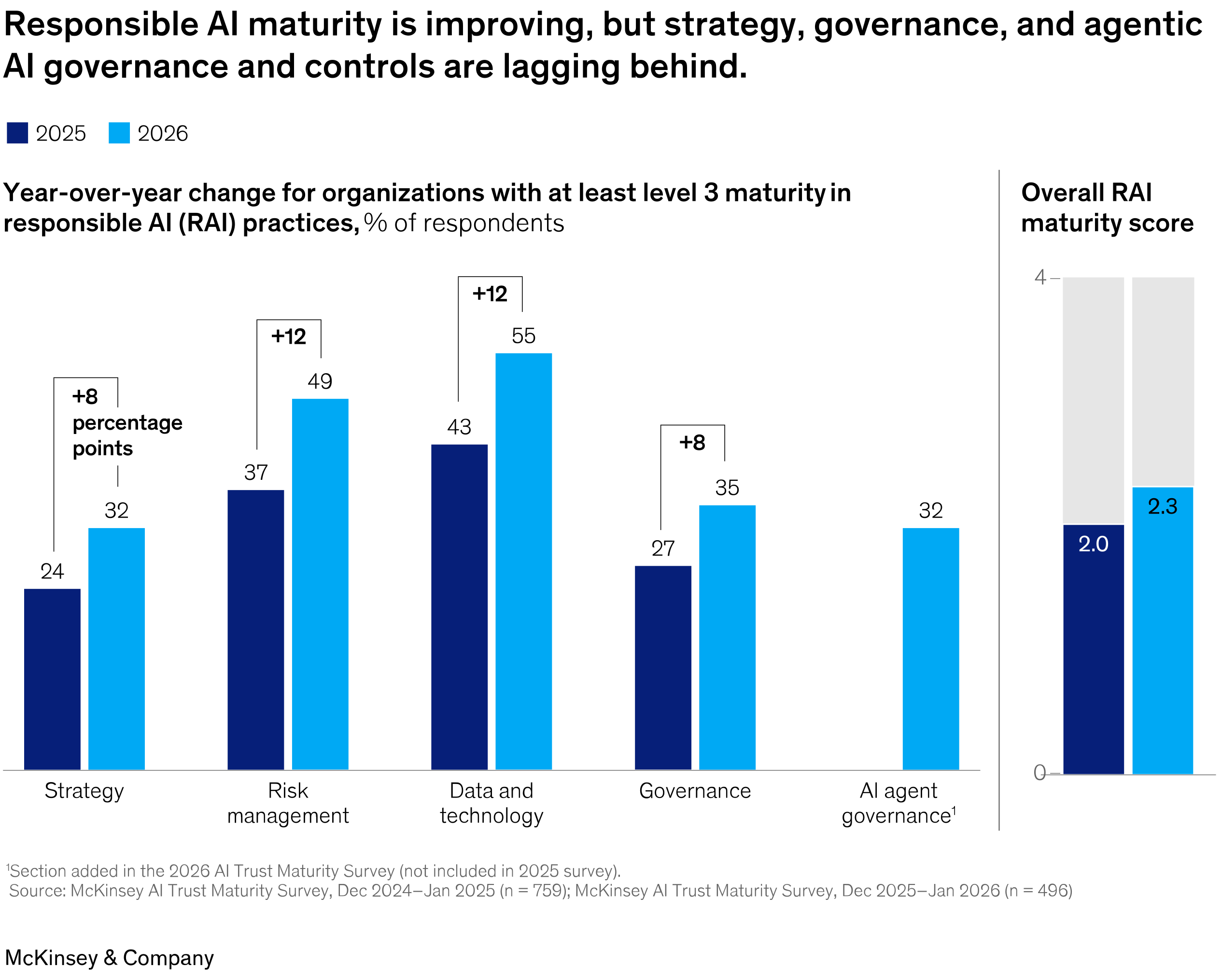

According to McKinsey, only 33% of organizations report mature governance capabilities for agentic AI. The other two-thirds are deploying agents faster than they can control them.

Governance built after deployment is expensive, slow, and incomplete. Governance built in from day one costs almost nothing additional — and unlocks the organizational trust required to scale. That means:

- Tiered guardrails: a non-negotiable baseline, risk-tiered policies per decision type, and clear exception escalation paths.

- Runtime controls: policy engines that evaluate actions before execution; least-privilege tool access; kill switches that pause individual agents without halting the workflow.

- Auditability: every agent action logged, every decision traceable. When something goes wrong — and it will — you can reconstruct exactly what happened.
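The runtime controls and audit trail can be combined in a single gate that every tool call passes through. The sketch below is a simplified assumption of how such a gate could work; `PolicyEngine`, the agent names, and the tool names are hypothetical, and a real deployment would write the log to durable storage.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyEngine:
    allowed_tools: dict[str, set[str]]  # agent name -> tools it may call
    paused_agents: set[str] = field(default_factory=set)
    audit_log: list[dict] = field(default_factory=list)

    def pause(self, agent: str) -> None:
        """Kill switch: stop one agent without touching the others."""
        self.paused_agents.add(agent)

    def authorize(self, agent: str, tool: str) -> bool:
        """Evaluate an action before execution and log the decision."""
        if agent in self.paused_agents:
            allowed, reason = False, "agent paused"
        elif tool not in self.allowed_tools.get(agent, set()):
            # Least privilege: anything not explicitly granted is denied.
            allowed, reason = False, "tool not permitted"
        else:
            allowed, reason = True, "ok"
        self.audit_log.append(
            {"agent": agent, "tool": tool, "allowed": allowed, "reason": reason}
        )
        return allowed
```

Denied calls are logged alongside approved ones, so the trace shows what an agent attempted as well as what it did. That record is what makes reconstruction possible after an incident.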

The organizations scaling agentic AI fastest are not the ones moving with the least governance. They are the ones whose governance lets them move without stopping.

Insight 4: Legacy Systems Are Where the Business Value Is Locked

80% of enterprise value sits in legacy systems — mainframes, monolithic databases, COBOL-era applications. Most agentic AI platforms assume modern cloud architecture. Most enterprises do not have it. This misalignment is the primary reason 68% of CTOs cite legacy infrastructure as their top adoption barrier.

The fix is not wholesale modernization. It is deliberate integration:

- API wrapper layers: abstraction interfaces between agents and legacy systems, so agents interact cleanly without understanding the underlying architecture.

- Data bridge strategies: structured extraction into agent-readable formats — unglamorous data quality work that most implementations skip and later regret.

- Incremental surface area: identify the specific data points and decision nodes your agents need, and build only those integrations first.
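As a rough illustration of an API wrapper layer, suppose a legacy system returns customer records as fixed-width text lines. The column layout, the `CustomerRecord` type, and the `LegacyCustomerAPI` class below are all assumptions made for the example; the real layout would come from the legacy system's own documentation.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Callable

# Hypothetical fixed-width layout: (field name, start offset, end offset).
LAYOUT = [("customer_id", 0, 8), ("name", 8, 28), ("balance_cents", 28, 38)]


@dataclass(frozen=True)
class CustomerRecord:
    customer_id: str
    name: str
    balance: Decimal


def parse_legacy_record(line: str) -> CustomerRecord:
    """Data bridge: turn one fixed-width line into an agent-readable record."""
    raw = {name: line[start:end].strip() for name, start, end in LAYOUT}
    return CustomerRecord(
        customer_id=raw["customer_id"],
        name=raw["name"],
        balance=Decimal(int(raw["balance_cents"])) / 100,
    )


class LegacyCustomerAPI:
    """Wrapper layer: agents call get_customer and never see the raw format."""

    def __init__(self, fetch_line: Callable[[str], str]):
        # fetch_line stands in for whatever actually reads the legacy system.
        self._fetch_line = fetch_line

    def get_customer(self, customer_id: str) -> CustomerRecord:
        return parse_legacy_record(self._fetch_line(customer_id))
```

The wrapper exposes only the one lookup the agent needs, which is the incremental surface area idea in miniature: new methods get added when a workflow requires them, not before.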

The most valuable AI system your organization can deploy in the next 18 months probably touches a 20-year-old database. Build your agentic architecture to reach it.

What This Looks Like in Practice

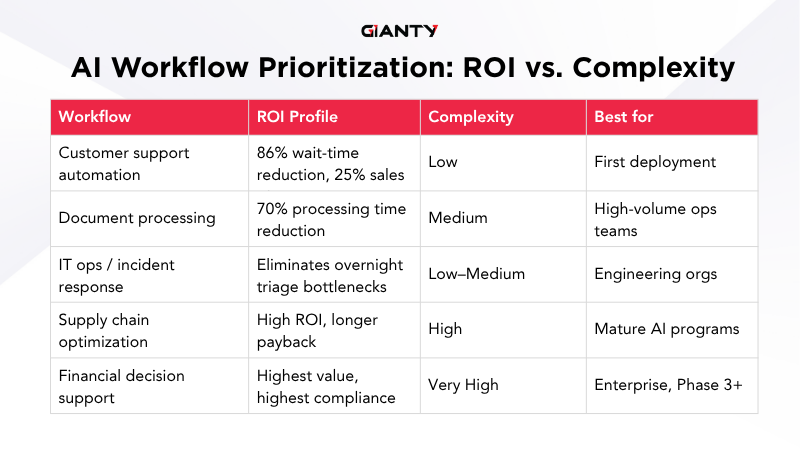

Not all agentic AI deployments are equal. ROI and implementation complexity vary significantly by workflow — which makes sequencing your rollout one of the most important strategic decisions you will make.

The principle: start where ROI is highest and complexity is lowest. Resist the temptation to solve your hardest problem first. Strategy is often the missing piece: 42% of organizations report they are still developing their agentic strategy roadmap, and 35% have no formal strategy at all. That’s why you need a clear roadmap and an AI partner who can map it to each specific use case or industry.

Here is what a well-sequenced first deployment looks like. A CPA firm processes hundreds of client tax documents every season. Senior staff spend 60–70% of peak season on manual data entry rather than advisory work.

A production agentic pipeline changes that: Form Detection → Document Splitting → Field Mapping → Extraction → Validation → Excel Output. Human review enters at one checkpoint (flagged exceptions), not seven. Result: 90%+ extraction accuracy across 14 IRS form types, 70% reduction in processing time, review cycles compressed from 8 weeks to 2–5 days.
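The shape of such a pipeline, with a single checkpoint for flagged exceptions, can be sketched as a list of stage functions run in order. This is not the GIANTY implementation: only three of the six stages are shown, and the stage logic is deliberately toy-level so the structure stays visible.

```python
from typing import Callable

Stage = Callable[[dict], dict]


def detect_form(doc: dict) -> dict:
    # Toy form detection based on a keyword in the text.
    doc["form_type"] = "W-2" if "W-2" in doc["text"] else "unknown"
    return doc


def extract(doc: dict) -> dict:
    # Toy extraction: pull "key=value" pairs out of the text.
    doc["fields"] = dict(p.split("=", 1) for p in doc["text"].split() if "=" in p)
    return doc


def validate(doc: dict) -> dict:
    # The single human checkpoint: flag exceptions instead of halting the run.
    doc["flagged"] = doc["form_type"] == "unknown" or "wages" not in doc["fields"]
    return doc


def run_pipeline(doc: dict, stages: list[Stage]) -> dict:
    """Pass one document through every stage in order."""
    for stage in stages:
        doc = stage(doc)
    return doc


PIPELINE = [detect_form, extract, validate]
```

Running `run_pipeline({"text": "W-2 wages=52000"}, PIPELINE)` yields an unflagged document, while an unrecognized input comes out with `flagged` set to `True` and lands in the review queue. Clean documents never wait on a person.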

Read the full GIANTY AI Tax case study to see how this system was built and what it took to run it in production.

Final Thoughts

In 2026, the agentic AI question is not “does the technology work?” It does. The models are production-ready. The platforms are shipping.

The question is whether your architecture, integration strategy, and governance are ready to run it. And more specifically — whether your team understands what running it actually requires at the operational level.

The four-layer framework (Perception → Planning → Execution → Verification) is not a technical diagram. It is the operational blueprint for every workflow your agents will run. Teams that design around it ship systems that hold. Teams that skip it ship pilots.

For the 89% of organizations still on the pilot side of that gap, the answer is usually: not yet — but the path is clear.

As an AI development company, GIANTY builds production-grade agentic AI systems and specializes in the part most vendors skip: integrating agents into real workflows, with real data, inside real enterprise environments, including the legacy ones. If you know the workflow you want to automate, we can tell you exactly what a production system looks like.

FAQs

- What is agentic AI for business? Agentic AI for business refers to AI systems that can autonomously plan, reason, and execute multi-step workflows to accomplish a defined goal — without requiring a human to supervise each action. Unlike fixed-script automation, agentic systems adapt to new information, use external tools, and recover from errors. Examples include autonomous support resolution, document processing pipelines, and IT incident response agents.

- How does an agentic workflow actually work? Every agentic workflow runs through four operational layers: Perception (the agent reads inputs from your business environment), Planning (the agent constructs a multi-step action plan using reasoning capabilities like reflection, tool use, and multi-agent coordination), Execution (the agent acts — calling APIs, writing records, triggering downstream systems), and Verification (the system validates its own output before committing results and routes exceptions to human review). The quality of each layer determines whether the system runs reliably in production.

- How is agentic AI different from a standard chatbot? Chatbots respond — they take one input and return one output. Agentic AI systems act — they take a goal, plan a sequence of steps, execute those steps across tools and data sources, and return a completed result. A chatbot can answer a question about a return policy. An agent can look up the order, initiate the return, update the CRM, and send the confirmation — autonomously, in sequence.

- What changes operationally when you run agentic AI? Three things change immediately: your team’s role shifts from execution to exception handling; your definition of “working” expands to include autonomous resolution rates and escalation quality, not just uptime; and your failure monitoring needs to account for quiet failures — plausible-but-wrong outputs — not just crashes. Teams that build operational dashboards for these metrics before deployment scale far more smoothly than those that retrofit them after.

- Where should we start with agentic AI for business? Start with one workflow that has a clear input, clear output, and measurable success criteria — and where ROI is high relative to complexity. Customer support automation and document processing consistently offer the fastest returns with manageable implementation risk. Map the process fully before touching the technology. Build governance in from day one. Get one system running cleanly in production before expanding scope.