A few months into an AI rollout, many companies start noticing the same pattern.

At first, everything feels exciting.

Marketing uses AI to draft content faster.

Another department starts testing it for internal search.

Operations explores AI-powered workflows.

Customer support wants an assistant.

Leadership sees the potential and asks the obvious question: how fast can we scale this?

Adoption usually starts small, then spreads from team to team.

Then leadership asks the next question: how do we scale this safely?

That is when many companies realize they do not just need AI tools. They need a way to govern them.

Enterprise AI governance is the layer that helps businesses move from scattered AI experiments to AI that can be trusted across the organization. For enterprises, this matters fast. Once AI starts touching internal systems, sensitive data, customer interactions, or business decisions, governance stops being optional. It becomes part of how the business scales AI responsibly.

What is Enterprise AI governance?

Enterprise AI governance is the framework a company uses to guide how AI is adopted, used, monitored, and improved across the business.

In practice, it helps answer questions like:

- Which AI tools are approved?

- What data can AI access?

- Which use cases need human review?

- Who owns the output?

- How do we monitor accuracy, risk, and compliance over time?

We define Enterprise AI governance as the rule book and the referee for enterprise AI, providing structured policies and oversight across the AI lifecycle.

In simpler terms, enterprise AI governance is what keeps AI useful without letting it become chaotic.

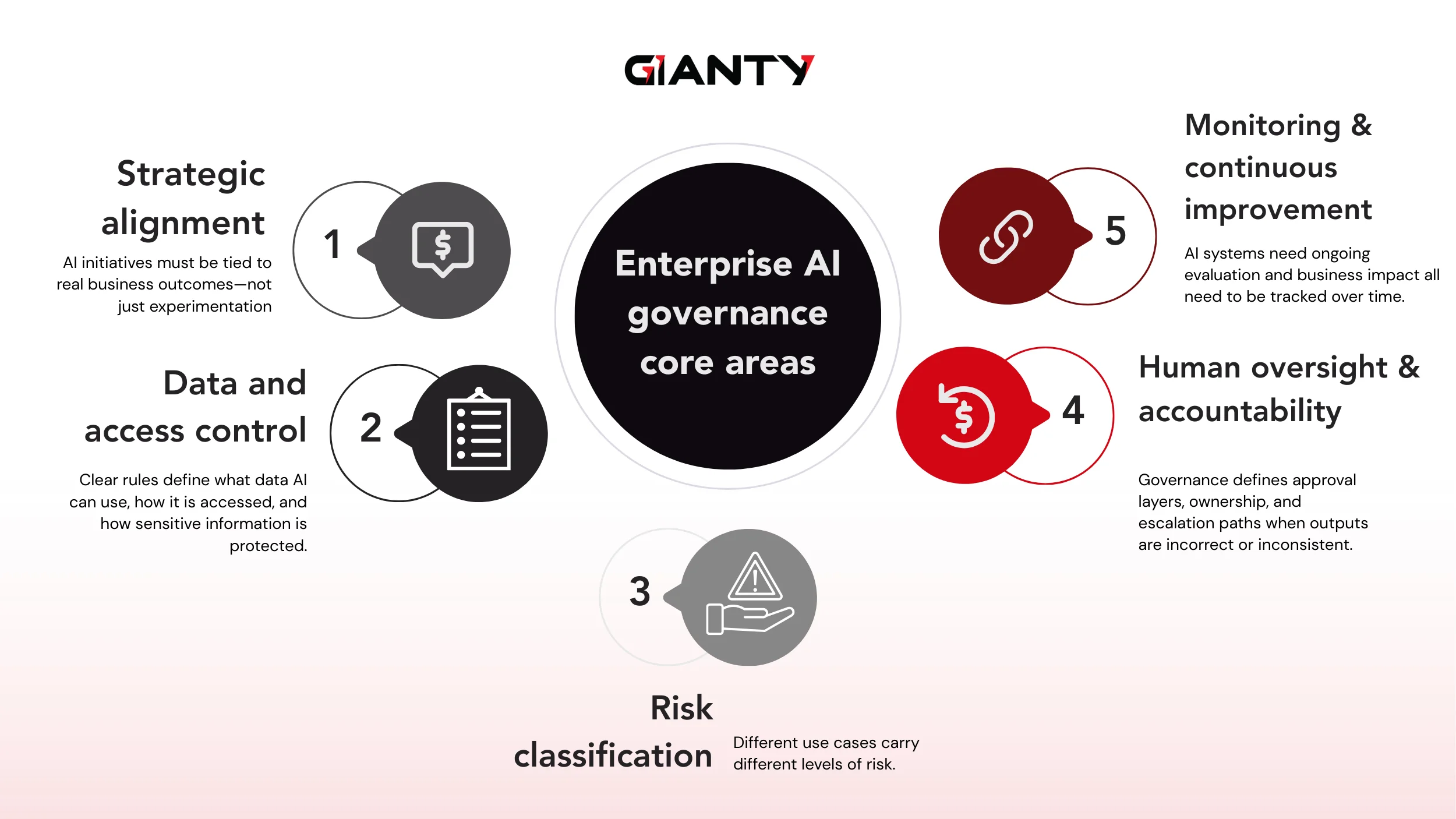

Today, it must address the full AI lifecycle within the business. For enterprises applying AI across multiple teams, business units, and systems, governance should cover five core areas.

Strategic alignment

AI use cases should be tied to real business goals, not just technical curiosity. Governance helps ensure AI investments support measurable outcomes such as operational efficiency, customer experience, revenue enablement, or process improvement.

In practice, the most successful AI initiatives are those that start from a clear business problem. For example, in GIANTY’s AI Tax solution, the focus was not on building a generic AI tool, but on solving a document-heavy bottleneck, reducing tax processing time from weeks to days.

Data and access control

Governance must define what data AI can use, where that data comes from, and how access is controlled.

As AI systems increasingly interact with enterprise data, protocols like Model Context Protocol (MCP) are emerging to standardize how models securely access tools, data, and systems – making governance easier to enforce at scale.
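To make the idea of controlled data access concrete, here is a minimal sketch of an allowlist check. It is not MCP and not any particular product's API. The sensitivity labels, the `AccessPolicy` and `DataSource` classes, and the source names are all hypothetical, chosen only to illustrate the principle that a use case may read a source only when that source is explicitly approved and falls within a sensitivity ceiling.

```python
from dataclasses import dataclass

# Hypothetical sensitivity labels, ordered from least to most sensitive.
# A real enterprise would substitute its own data classification scheme.
SENSITIVITY_ORDER = ["public", "internal", "confidential", "restricted"]


@dataclass(frozen=True)
class DataSource:
    name: str
    sensitivity: str  # one of SENSITIVITY_ORDER


@dataclass(frozen=True)
class AccessPolicy:
    use_case: str
    max_sensitivity: str         # highest sensitivity this use case may read
    allowed_sources: frozenset   # explicit allowlist of source names


def can_access(policy: AccessPolicy, source: DataSource) -> bool:
    """Allow access only if the source is allowlisted AND within the ceiling."""
    within_ceiling = (
        SENSITIVITY_ORDER.index(source.sensitivity)
        <= SENSITIVITY_ORDER.index(policy.max_sensitivity)
    )
    return source.name in policy.allowed_sources and within_ceiling


# Illustrative policy for a customer support assistant (assumed names).
support_policy = AccessPolicy(
    use_case="support-assistant",
    max_sensitivity="internal",
    allowed_sources=frozenset({"help_center", "product_docs"}),
)

print(can_access(support_policy, DataSource("help_center", "internal")))
print(can_access(support_policy, DataSource("payroll", "restricted")))
```

Requiring both conditions is a deliberate choice in this sketch: an allowlisted source that is later reclassified as more sensitive is blocked automatically, without anyone having to remember to update the list.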

Risk classification

Not every AI use case carries the same level of risk.

A writing assistant and an AI system supporting financial review should not be governed the same way. Enterprises need a structured way to classify use cases and apply the right level of review.
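One way to picture risk-based classification is a small rule set that maps a few yes/no attributes of a use case to a tier, and each tier to a review path. The sketch below is illustrative only. The three tiers, the attributes, and the review descriptions are assumptions made for this example, not a standard or a prescribed method.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class UseCase:
    name: str
    uses_sensitive_data: bool
    customer_facing: bool
    affects_decisions: bool  # e.g. financial, legal, or HR decisions


def classify(use_case: UseCase) -> RiskTier:
    """Assign a tier from simple rules (hypothetical, for illustration)."""
    if use_case.affects_decisions:
        return RiskTier.HIGH
    if use_case.uses_sensitive_data or use_case.customer_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Hypothetical review path for each tier.
REVIEW_BY_TIER = {
    RiskTier.LOW: "self-service approval",
    RiskTier.MEDIUM: "security and data owner review",
    RiskTier.HIGH: "governance board review plus human sign-off on outputs",
}

writing_assistant = UseCase("writing assistant", False, False, False)
financial_review = UseCase("financial review support", True, False, True)

for uc in (writing_assistant, financial_review):
    tier = classify(uc)
    print(f"{uc.name}: {tier.value} -> {REVIEW_BY_TIER[tier]}")
```

Under these assumed rules, the writing assistant lands in the low tier and the financial review system in the high tier, which mirrors the contrast described above.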

Human oversight and accountability

AI can assist teams, but accountability still belongs to the business. Governance should clearly define where human approval is required, who owns each use case, and how exceptions or errors are handled.

If this level of clarity is difficult to establish internally, it may be time to work with an AI partner who understands both enterprise workflows and governance in practice – not just AI technology.

Monitoring and continuous improvement

AI systems cannot simply be launched and left alone. Enterprise AI Governance must include monitoring for quality, security, policy compliance, usage patterns, and evolving business impact over time.
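As a rough illustration of what ongoing monitoring can look like at its simplest, the sketch below records one audit event per AI interaction and rolls the events up per use case. The `AuditEvent` fields and the choice of metrics (calls, policy violations, human overrides) are assumptions for this example. A production setup would typically write to a durable log store rather than an in-memory list.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class AuditEvent:
    timestamp: str
    use_case: str
    user: str
    policy_violation: bool
    human_overrode_output: bool


def record_event(log, use_case, user, policy_violation=False,
                 human_overrode_output=False):
    """Append one audit event to the log (an in-memory list in this sketch)."""
    event = AuditEvent(
        timestamp=datetime.now(timezone.utc).isoformat(),
        use_case=use_case,
        user=user,
        policy_violation=policy_violation,
        human_overrode_output=human_overrode_output,
    )
    log.append(event)
    return event


def summarize(log):
    """Count calls, violations, and human overrides per use case."""
    summary = {}
    for event in log:
        stats = summary.setdefault(
            event.use_case, {"calls": 0, "violations": 0, "overrides": 0}
        )
        stats["calls"] += 1
        stats["violations"] += int(event.policy_violation)
        stats["overrides"] += int(event.human_overrode_output)
    return summary


audit_log = []
record_event(audit_log, "support-assistant", "alice")
record_event(audit_log, "support-assistant", "bob", human_overrode_output=True)
record_event(audit_log, "tax-review", "carol", policy_violation=True)

print(summarize(audit_log))
```

A rising override count for one use case is the kind of signal that could prompt a quality review, while violations point to a policy or access problem.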

The Enterprise AI governance risk landscape: what must be controlled

As AI adoption expands, the enterprise risk landscape becomes more complex. To govern AI effectively, enterprises need to understand what exactly must be controlled.

Data exposure and privacy risk

Uncontrolled use of AI tools can lead to leakage of sensitive data—customer information, internal documents, or proprietary knowledge.

Inaccurate or misleading outputs

AI can generate confident but incorrect results. In enterprise workflows, this can impact reporting, customer communication, and operational decisions.

Bias and fairness

AI outputs can reflect underlying bias in data or design, especially in high-impact use cases involving customers or employees.

Security and misuse

As AI connects to enterprise systems, risks extend beyond outputs to actions such as unauthorized access, prompt injection, or unintended system behavior.

Lack of accountability

Without clear ownership and traceability, it becomes difficult to understand how decisions were made or who is responsible when something goes wrong.

Regulatory and compliance pressure

AI is increasingly under scrutiny. Enterprises must demonstrate responsible usage, even as regulations continue to evolve.

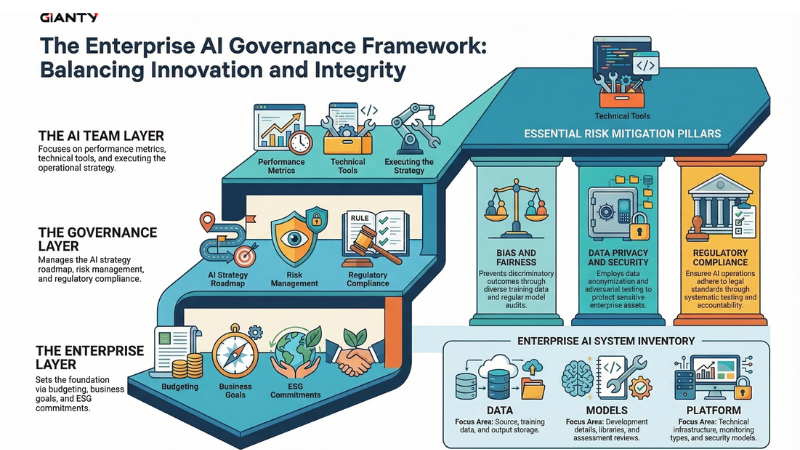

How to structure AI governance at enterprise scale

Enterprise AI governance should be designed to scale, not overbuilt from the start.

The most effective approach is to begin with real use cases, establish practical controls, and evolve governance as adoption grows.

Start small and scale up

Begin with a few high-impact use cases where AI is already delivering value.

These early implementations help define governance in practice: what risks exist, what controls are needed, and how teams actually use AI. As adoption expands, governance can grow with it.

Establish an Enterprise AI governance framework

As AI scales, governance needs to become structured and repeatable.

A strong enterprise model typically includes:

- Executive sponsorship and business ownership: Leadership defines direction and risk tolerance, while each use case has a clear owner.

- Cross-functional governance: AI impacts multiple teams, so governance should involve business, IT, data, security, legal, and operations.

- Risk-based use case approval: Low-risk use cases move faster. High-risk ones require stricter review and oversight.

- Policy, standards, and guardrails: Clear rules define approved tools, data usage, and human oversight requirements.

- Technical controls and observability: Governance must be enforced through systems, including access control, monitoring, logging, and audit trails.

- Training and operating discipline: Employees need clear guidance on how to use AI safely. Governance only works when applied in daily workflows.

Communicate and promote the value

Governance should be positioned as an enabler, not a restriction.

If teams see it as friction, they will work around it. If they understand how it helps them use AI safely and scale faster, adoption becomes easier.

Clear communication, training, and leadership alignment are essential—especially as AI moves beyond experimentation.

Define roles and responsibilities

Governance fails when ownership is unclear.

Each AI initiative should define roles across both business and technical teams:

- Business owners are responsible for outcomes

- Data owners are responsible for access and quality

- IT teams manage integration

- Security and compliance teams oversee risk

- AI teams are responsible for implementation and performance

- Governance leads handle oversight and escalation

Clear accountability ensures AI systems are not only deployed but properly managed over time.
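A lightweight way to enforce this is to refuse to register an AI initiative until every role has a named owner. The sketch below assumes a simple dictionary per initiative. The role keys mirror the roles listed above, but the key names, the team names, and the `missing_roles` helper are hypothetical.

```python
# Hypothetical role keys, one per role in the list above.
REQUIRED_ROLES = (
    "business_owner",
    "data_owner",
    "it_integration",
    "security_compliance",
    "ai_team",
    "governance_lead",
)


def missing_roles(assignments: dict) -> list:
    """Return the required roles that have no named owner, in a stable order."""
    return [role for role in REQUIRED_ROLES if not assignments.get(role)]


# Illustrative initiative with two roles left unassigned.
tax_assistant = {
    "business_owner": "Finance Operations",
    "data_owner": "Finance Data Office",
    "it_integration": "Platform Team",
    "ai_team": "Applied AI",
}

gaps = missing_roles(tax_assistant)
if gaps:
    print("Cannot approve; unassigned roles:", ", ".join(gaps))
else:
    print("All roles assigned")
```

Running a check like this at intake time turns unclear ownership into a visible blocker rather than something discovered after an incident.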

Common enterprise pitfalls and how GIANTY helps address them

Most enterprises don’t fail because AI doesn’t work. They fail because AI is not structured to scale.

Here are the most common pitfalls enterprises run into before adopting Enterprise AI Governance:

- Lack of visibility into AI usage: AI adoption spreads across teams without centralized tracking or control.

- Shadow AI and uncontrolled tools: Employees use unapproved tools, increasing data and security risks.

- Governance exists only on paper: Policies are defined but not embedded into real workflows or systems.

- Unclear ownership and accountability: No clear responsibility for AI outcomes, decisions, or errors.

- AI not integrated into enterprise systems: Solutions remain isolated and cannot drive real business actions.

- Inconsistent data access and control: No clear rules on what data AI can access or how it is handled.

- Lack of monitoring and auditability: Limited visibility into performance, usage, and risk over time.

- One-size-fits-all governance: The same level of control is applied to all use cases, slowing adoption or missing critical risks.

- AI disconnected from business value: Use cases are driven by technology, not measurable outcomes.

- Limited trust in AI outputs: Teams override AI decisions due to a lack of reliability or clarity.

- No structured path to scale: AI remains stuck in the pilot stage without a clear roadmap for expansion.

How GIANTY AI Development Service helps

We work closely with enterprises to design, build, and operate AI systems that scale. Our team supports organizations in establishing clear Enterprise AI Governance frameworks, implementing monitoring and control layers, and integrating AI into real business workflows.

- Start with focused POCs (Proof of Concept): Validate real business value quickly with targeted AI use cases before scaling

- Move to production-ready AI development: Build robust, secure, and scalable custom AI solutions designed for enterprise environments

- Integrate AI into real business workflows: Connect AI with core systems (CRM, ERP, internal platforms), so outputs trigger real actions

- Embed governance from day one: Implement access control, human-in-the-loop, monitoring, and auditability as part of the system—not after

- Design for enterprise-scale architecture: Ensure AI systems can expand across teams, use cases, and data environments without losing control

- Enable continuous optimization and performance tracking: Monitor usage, improve output quality, and adapt systems as business needs evolve

The result is a complete Enterprise AI Governance framework combined with AI implementation, enabling systems that are trusted, compliant, and built to scale.

Final thoughts

Enterprise AI Governance is no longer optional. As AI moves deeper into real business workflows, the challenge is not just adoption: it is control, trust, and scalability. Without a clear governance model, AI remains fragmented, difficult to manage, and hard to scale beyond isolated use cases.

The shift is clear: AI is no longer just a tool. It is becoming part of how enterprises operate.

And like any core system, it needs structure – clear ownership, defined processes, and built-in control from the start.

Contact GIANTY to learn how we can help you turn AI governance into a scalable, enterprise-ready capability.

Looking ahead to the next trends in AI? Subscribe to the GIANTY newsletter for expert insights and updates.